Yuewen Group is a global leader in online literature and IP operations. Through its overseas platform WebNovel, it has attracted about 260 million users in over 200 countries and regions, promoting Chinese web literature globally. The company also adapts quality web novels into films and animations for international markets, expanding the global influence of Chinese culture.

Today, we are excited to announce the availability of Prompt Optimization on Amazon Bedrock. With this capability, you can now optimize your prompts for several use cases with a single API call or a click of a button on the Amazon Bedrock console. In this blog post, we discuss how Prompt Optimization improves the performance of large language models (LLMs) for intelligent text processing tasks at Yuewen Group.

Evolution from Traditional NLP to LLM in Intelligent Text Processing

Yuewen Group leverages AI for intelligent analysis of extensive web novel texts. Initially relying on proprietary natural language processing (NLP) models, Yuewen Group faced challenges with prolonged development cycles and slow updates. To improve performance and efficiency, Yuewen Group transitioned to Anthropic’s Claude 3.5 Sonnet on Amazon Bedrock.

Claude 3.5 Sonnet offers enhanced natural language understanding and generation capabilities, handling multiple tasks concurrently with improved context comprehension and generalization. Using Amazon Bedrock significantly reduced technical overhead and streamlined the development process.

However, Yuewen Group initially struggled to fully harness the LLM’s potential due to limited experience in prompt engineering. In certain scenarios, the LLM’s performance fell short of traditional NLP models. For example, in the task of “character dialogue attribution”, traditional NLP models achieved around 80% accuracy, while LLMs with unoptimized prompts only reached around 70%. This discrepancy highlighted the need for strategic prompt optimization to enhance the capabilities of LLMs in these specific use cases.

Challenges in Prompt Optimization

Manual prompt optimization can be challenging for the following reasons:

Difficulty in Evaluation: Assessing the quality of a prompt and its consistency in eliciting desired responses from a language model is inherently complex. Prompt effectiveness is not only determined by the prompt quality, but also by its interaction with the specific language model, depending on its architecture and training data. This interplay requires substantial domain expertise to understand and navigate. In addition, evaluating LLM response quality for open-ended tasks often involves subjective and qualitative judgements, making it challenging to establish objective and quantitative optimization criteria.

Context Dependency: Prompt effectiveness is highly contingent on the specific contexts and use cases. A prompt that works well in one scenario may underperform in another, necessitating extensive customization and fine-tuning for different applications. Therefore, developing a universally applicable prompt optimization method that generalizes well across diverse tasks remains a significant challenge.

Scalability: As LLMs find applications in a growing number of use cases, the number of required prompts and the complexity of the language models continue to rise. This makes manual optimization increasingly time-consuming and labor-intensive. Crafting and iterating prompts for large-scale applications can quickly become impractical and inefficient. Meanwhile, as the number of potential prompt variations increases, the search space for optimal prompts grows exponentially, rendering manual exploration of all combinations infeasible, even for moderately complex prompts.

Given these challenges, automatic prompt optimization technology has garnered significant attention in the AI community. In particular, Bedrock Prompt Optimization offers two main advantages:

- Efficiency: It saves considerable time and effort by automatically generating high-quality prompts suited for a variety of target LLMs supported on Bedrock, alleviating the need for tedious manual trial and error in model-specific prompt engineering.

- Performance Enhancement: It notably improves AI performance by creating optimized prompts that enhance the output quality of language models across a wide range of tasks and tools.

These benefits not only streamline the development process, but also lead to more efficient and effective AI applications, positioning auto-prompting as a promising advancement in the field.

Introduction to Bedrock Prompt Optimization

Prompt Optimization on Amazon Bedrock is an AI-driven feature that automatically optimizes under-developed prompts for customers’ specific use cases, enhancing performance across different target LLMs and tasks. Prompt Optimization is integrated into Amazon Bedrock Playground and Prompt Management, so you can easily create, evaluate, store, and use optimized prompts in your AI applications.

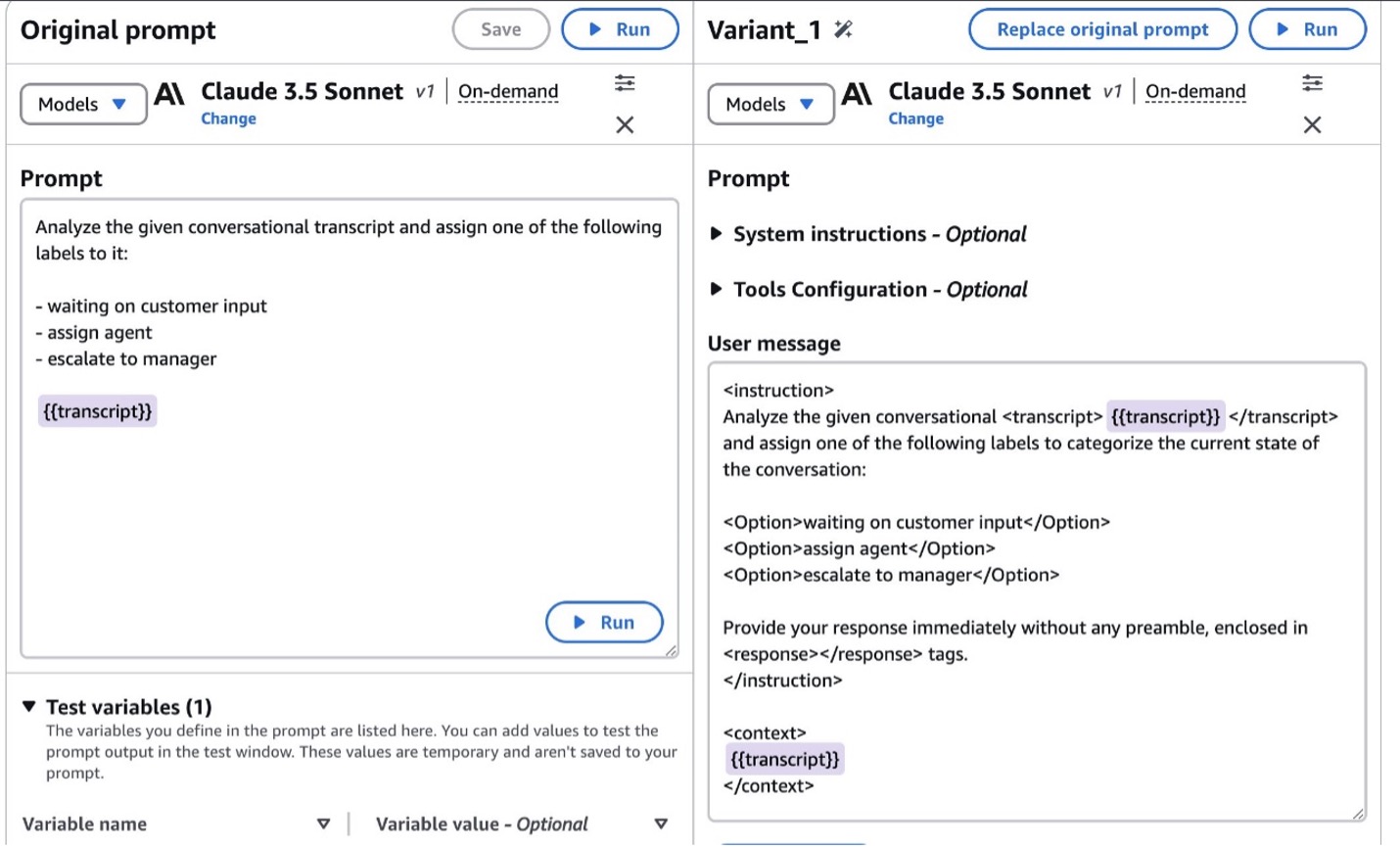

On the AWS Management Console for Prompt Management, users input their original prompt. The prompt can be a template with the required variables represented by placeholders (for example, {{document}}), or a full prompt with actual text filled into the placeholders. After selecting a target LLM from the supported list, users can start the optimization process with a single click, and the optimized prompt is generated within seconds. The console then displays the Compare Variants tab, presenting the original and optimized prompts side by side for quick comparison. The optimized prompt often includes more explicit instructions on processing the input variables and generating the desired output format. Users can observe the enhancements made by Prompt Optimization to improve the prompt’s performance for their specific task.
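The same optimization can also be requested programmatically. The following is a minimal sketch, assuming the `optimize_prompt` operation of the boto3 `bedrock-agent-runtime` client and its streamed response of `analyzePromptEvent` and `optimizedPromptEvent` items; the helper name `optimize_prompt_text` is hypothetical, and the exact request and response shapes should be checked against the current API reference before use.

```python
def optimize_prompt_text(client, prompt_text, target_model_id):
    """Request an optimized prompt and return (optimized_text, analysis_messages).

    `client` is assumed to be a boto3 "bedrock-agent-runtime" client.
    """
    response = client.optimize_prompt(
        input={"textPrompt": {"text": prompt_text}},
        targetModelId=target_model_id,
    )

    optimized_text = None
    analysis_messages = []

    # The result is assumed to arrive as a stream of events: analysis
    # messages first, then the rewritten prompt.
    for event in response["optimizedPrompt"]:
        if "analyzePromptEvent" in event:
            analysis_messages.append(event["analyzePromptEvent"].get("message", ""))
        elif "optimizedPromptEvent" in event:
            optimized_text = (
                event["optimizedPromptEvent"]["optimizedPrompt"]["textPrompt"]["text"]
            )

    return optimized_text, analysis_messages
```

To try it, create the client with `boto3.client("bedrock-agent-runtime")` in a Region where the feature is available, then call `optimize_prompt_text(client, "Summarize the following document:\n{{document}}", model_id)`, where `model_id` is the identifier of a supported target model. Passing the client in as a parameter keeps the helper easy to exercise with a stub during testing.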

Comprehensive evaluations on open-source datasets covering classification, summarization, open-book QA / RAG, and agent / function-calling tasks, as well as on complex real-world customer use cases, have shown substantial improvements from the optimized prompts.

Under the hood, a Prompt Analyzer and a Prompt Rewriter are combined to optimize the original prompt. The Prompt Analyzer is a fine-tuned LLM that decomposes the prompt structure by extracting its key constituent elements, such as the task instruction, input context, and few-shot demonstrations. The extracted prompt components are then passed to the Prompt Rewriter module, which employs a general LLM-based meta-prompting strategy to further improve the prompt signatures and restructure the prompt layout. As a result, the Prompt Rewriter produces a refined and enhanced version of the initial prompt tailored to the target LLM.

Results of Prompt Optimization

Using Bedrock Prompt Optimization, Yuewen Group achieved significant improvements across various intelligent text analysis tasks, including name extraction and multi-option reasoning use cases. For character dialogue attribution, for example, optimized prompts reached 90% accuracy, surpassing traditional NLP models by 10 percentage points, according to the customer’s experimentation.
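To make the comparison concrete, here is a small sketch of how such an accuracy gain could be measured. It assumes accuracy means an exact match between the predicted and the labeled speaker for each line of dialogue, which the evaluation above does not spell out. The speaker names and predictions are invented toy data, not Yuewen Group's, chosen only so that the two systems land on 80% and 90%.

```python
def accuracy(predicted, gold):
    """Fraction of items whose predicted speaker exactly matches the label."""
    if len(predicted) != len(gold) or not gold:
        raise ValueError("predicted and gold must be non-empty and the same length")
    return sum(p == g for p, g in zip(predicted, gold)) / len(gold)


# Toy labels and predictions, invented purely for illustration.
gold      = ["Lin", "Mei", "Lin", "Chen", "Mei", "Lin", "Chen", "Mei", "Lin", "Chen"]
baseline  = ["Lin", "Mei", "Lin", "Chen", "Mei", "Lin", "Chen", "Mei", "Mei", "Lin"]  # 8 of 10 correct
optimized = ["Lin", "Mei", "Lin", "Chen", "Mei", "Lin", "Chen", "Mei", "Lin", "Lin"]  # 9 of 10 correct

baseline_acc = accuracy(baseline, gold)
optimized_acc = accuracy(optimized, gold)

# Absolute gain in percentage points versus gain relative to the baseline.
point_gain = (optimized_acc - baseline_acc) * 100
relative_gain = (optimized_acc - baseline_acc) / baseline_acc * 100

print(f"baseline {baseline_acc:.0%}, optimized {optimized_acc:.0%}")
print(f"gain: {point_gain:.1f} points, {relative_gain:.1f}% relative")
```

Reporting both numbers avoids ambiguity: moving from 80% to 90% is a gain of 10 percentage points, which corresponds to a 12.5% relative improvement over the baseline.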

Using the power of foundation models, Prompt Optimization produces high-quality results with minimal manual prompt iteration. Most importantly, this feature enabled Yuewen Group to complete prompt engineering processes in a fraction of the time, greatly improving development efficiency.

Prompt Optimization Best Practices

Throughout our experience with Prompt Optimization, we’ve compiled several tips for a better user experience:

- Use clear and precise input prompts: Prompt Optimization benefits from clear intent and key expectations in your input prompt. A clear prompt structure also gives Prompt Optimization a better starting point, for example, separating different prompt sections with new lines.

- Use English as the input language: We recommend using English as the input language for Prompt Optimization. Currently, prompts containing a large proportion of text in other languages might not yield the best results.

- Avoid overly long input prompts and examples: Excessively long prompts and few-shot examples significantly increase the difficulty of semantic understanding and strain the output length limit of the rewriter. Also avoid placing too many placeholders in a single sentence, and avoid removing the context that describes each placeholder from the prompt body. For example, instead of “Answer the {{question}} by reading {{author}}’s {{paragraph}}”, assemble your prompt in forms such as “Paragraph:\n{{paragraph}}\nAuthor:\n{{author}}\nAnswer the following question:\n{{question}}”.

- Use in the early stages of prompt engineering: Prompt Optimization excels at quickly optimizing less-structured prompts (also known as “lazy prompts”) during the early stage of prompt engineering. The improvement is likely to be more significant for such prompts compared to those already carefully curated by experts or prompt engineers.
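The placeholder tip above can be checked mechanically. The sketch below is illustrative only: the helper names `max_placeholders_per_line` and `render` are hypothetical, the sample values are made up, and the regular expression assumes placeholders are written as `{{name}}` with word characters only. It counts how many placeholders share a line in the two prompt layouts from the tip, then fills the structured template.

```python
import re

# Matches placeholders written as {{name}}, the style used in the tips above.
PLACEHOLDER = re.compile(r"\{\{(\w+)\}\}")


def max_placeholders_per_line(template: str) -> int:
    """Return the largest number of placeholders found on any single line."""
    return max(len(PLACEHOLDER.findall(line)) for line in template.split("\n"))


def render(template: str, values: dict) -> str:
    """Replace each {{name}} with values[name]; fail loudly on a missing value."""
    def substitute(match):
        name = match.group(1)
        if name not in values:
            raise KeyError(f"no value supplied for placeholder '{name}'")
        return values[name]

    return PLACEHOLDER.sub(substitute, template)


INLINE = "Answer the {{question}} by reading {{author}}'s {{paragraph}}"
STRUCTURED = (
    "Paragraph:\n{{paragraph}}\n"
    "Author:\n{{author}}\n"
    "Answer the following question:\n{{question}}"
)

# Made-up sample values for illustration.
values = {
    "paragraph": "The rain had stopped by the time Lin reached the gate.",
    "author": "An example author",
    "question": "Who reached the gate?",
}

print(max_placeholders_per_line(INLINE))      # all placeholders crowd one sentence
print(max_placeholders_per_line(STRUCTURED))  # each placeholder has its own labeled line
print(render(STRUCTURED, values))
```

A check like this could run before a prompt is submitted for optimization, flagging templates where several placeholders share one sentence without labels describing them.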

Conclusion

Prompt Optimization on Amazon Bedrock has proven to be a game-changer for Yuewen Group in their intelligent text processing. By significantly improving the accuracy of tasks like character dialogue attribution and streamlining the prompt engineering process, Prompt Optimization has enabled Yuewen Group to fully harness the power of LLMs. This case study demonstrates the potential of Prompt Optimization to improve LLM applications across industries, offering both time savings and performance improvements. As AI continues to evolve, tools like Prompt Optimization will play a crucial role in helping businesses maximize the benefits of LLMs in their operations.

We encourage you to explore Prompt Optimization to improve the performance of your AI applications. To get started with Prompt Optimization, see the following resources:

- Amazon Bedrock Pricing page

- Amazon Bedrock user guide

- Amazon Bedrock API reference

About the Authors

Rui Wang is a senior solutions architect at AWS with extensive experience in game operations and development. As an enthusiastic Generative AI advocate, he enjoys exploring AI infrastructure and LLM application development. In his spare time, he loves eating hot pot.

Hao Huang is an Applied Scientist at the AWS Generative AI Innovation Center. His expertise lies in generative AI, computer vision, and trustworthy AI. Hao also contributes to the scientific community as a reviewer for leading AI conferences and journals, including CVPR, AAAI, and TMM.

Guang Yang, Ph.D. is a senior applied scientist with the Generative AI Innovation Center at AWS. He has been with AWS for 5 years, leading several customer projects in the Greater China Region spanning different industry verticals such as software, manufacturing, retail, AdTech, and finance. He has more than 10 years of academic and industry experience in building and deploying ML and GenAI-based solutions for business problems.

Zhengyuan Shen is an Applied Scientist at Amazon Bedrock, specializing in foundational models and ML modeling for complex tasks including natural language and structured data understanding. He is passionate about leveraging innovative ML solutions to enhance products or services, thereby simplifying the lives of customers through a seamless blend of science and engineering. Outside work, he enjoys sports and cooking.

Huong Nguyen is a Principal Product Manager at AWS. She is a product leader at Amazon Bedrock, with 18 years of experience building customer-centric and data-driven products. She is passionate about democratizing responsible machine learning and generative AI to enable customer experience and business innovation. Outside of work, she enjoys spending time with family and friends, listening to audiobooks, traveling, and gardening.
